In context: Eight radio telescopes trained on the center of the Messier 87 galaxy each recorded 350TB of data per day for one week. That left the scientists with five petabytes of data, stored on high-performance helium-filled hard drives, to process. An amazing cache by anyone's standards.

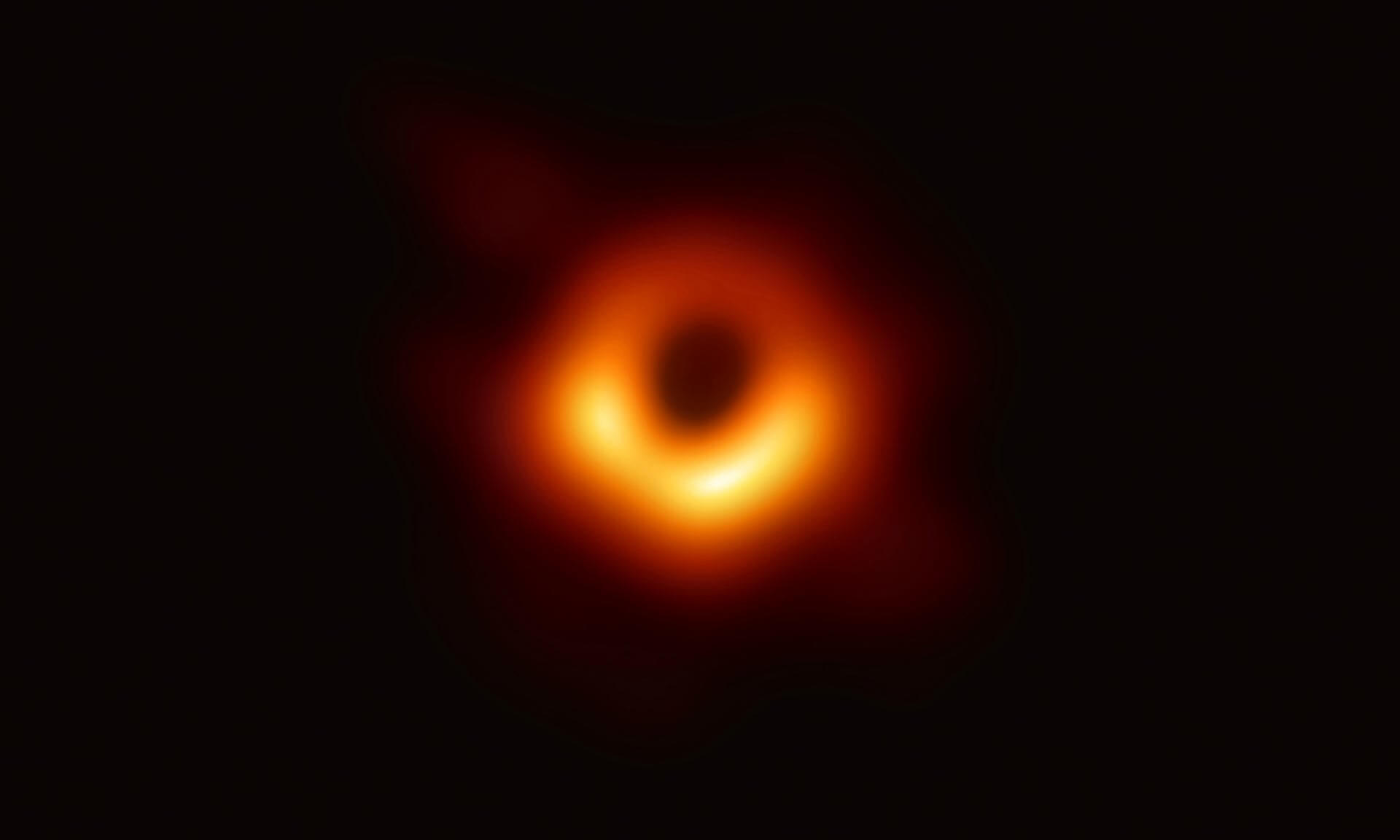

On Wednesday, we reported that scientists had captured the first-ever image of a black hole. It was big news in the astronomy community and, apparently, in the data-hoarding community as well.

Subreddit DataHoarder was buzzing after one of the scientists working on the black hole project tweeted a picture of his colleague, Katie Bouman, posing with some of the hard drives that held the image data.

She stood behind eight towers that appeared to hold eight drives each. Presumably, this was only part of the cache: 64 HDDs could hold 5PB only if each had a capacity of about 80TB, something drive makers have not yet achieved.

"The Black Hole photo is very impressive, but I'm more interested in where they got these 80TB drives," said one Redditor.

This is her with the hard drives containing the image data for the Black Hole. pic.twitter.com/unOud3msk2

--- Muhammad Karim (@mkarim) April 10, 2019

Another poster posited that they used 12TB drives and that this was only one-eighth of the data.

"Well that makes sense as the data was captured across 8 different telescopes, so this is 1/8th of the whole setup," said ThomasTheSpider.

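The Redditors' back-of-the-envelope numbers are easy to check. The short sketch below assumes decimal units (1PB = 1,000TB) and takes the 64-drive count from the estimate of the photo; the function names are ours, for illustration only.

```python
TB_PER_PB = 1000  # assumption: decimal units, as drive makers quote capacities

def per_drive_tb(total_pb, drives):
    """Capacity each drive would need to hold total_pb spread over `drives` drives."""
    return total_pb * TB_PER_PB / drives

def rack_capacity_tb(drives, drive_tb):
    """Raw capacity of a set of identical drives."""
    return drives * drive_tb

# If the 64 drives in the photo held all 5PB:
print(per_drive_tb(5, 64))        # 78.125 TB per drive

# If they are 12TB drives holding one telescope's share:
print(rack_capacity_tb(64, 12))   # 768 TB in the photo
print(5 * TB_PER_PB / 8)          # 625.0 TB per telescope, assuming an even split
```

With 12TB drives, the 64 drives in the photo would comfortably hold an even one-eighth share of the total, which is consistent with the poster's guess.
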
The black hole image was put together using data from eight radio telescopes from around the world. Each telescope gathered massive amounts of information on its own. All told, the scientists were dealing with more than five petabytes of data, enough to store 5,000 years' worth of MP3s.

Or as one data hoarder put it, "Black holes are cool, I guess, but imagine all the [lossless Blu-Ray files] you could store on those bad boys, in RAID 1 no less."

Regardless of how many drives were used, sharing this much information poses a logistical problem. Sending it over the internet is impractical. Instead, the drives were crated and flown to Boston and Germany for processing.
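
To get a feel for why shipping drives wins, here is a rough estimate of how long five petabytes would take to move over a network link. The link speeds are hypothetical examples, not figures from the project, and the calculation ignores protocol overhead and assumes the link runs flat out the whole time.

```python
def transfer_days(petabytes, gigabits_per_second):
    """Days needed to move `petabytes` over a link running at full speed."""
    bits = petabytes * 1e15 * 8                     # decimal petabytes to bits
    seconds = bits / (gigabits_per_second * 1e9)    # link speed in bits per second
    return seconds / 86_400                         # 86,400 seconds per day

for gbps in (1, 10, 100):                           # assumed link speeds
    print(f"{gbps:>3} Gbps: {transfer_days(5, gbps):.1f} days")
```

At a sustained 1 Gbps, the transfer would take well over a year, which is why a crate of hard drives on a plane is the faster option.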

The data was then pieced together by an algorithm written by Katie Bouman. Blank spots were filled in by the software. Bouman, who is an assistant professor at the California Institute of Technology, explained the process back in 2016 when she was still an MIT student (above).

"If we want to see the black hole, we need a telescope the size of the Earth [like a disco ball]," Bouman said. In other words, mirrors covering the entire planet, or at least a hemisphere.

However, having only eight mirrors limits what can be seen, so data was collected from each telescope throughout the day, every day, for an entire week.

"As the Earth rotates, we get to see other new measurements," she continued. "In other words, as the disco ball spins, those mirrors change locations, and we get to observe different parts of the image. The imaging algorithms we developed fill in the missing gaps of the disco ball in order to reconstruct the underlying black hole image."

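The disco-ball idea can be illustrated numerically. The sketch below is a textbook illustration, not the team's algorithm: it takes one hypothetical pair of telescopes, described by an Earth-fixed baseline vector, and applies the standard interferometry projection to show how that baseline looks from the source as the Earth turns. The baseline components, the 12-hour window, and the declination (roughly that of M87) are assumptions chosen for illustration.

```python
import math

def uv_point(baseline_m, hour_angle_rad, declination_rad):
    """Project an Earth-fixed baseline (Bx, By, Bz) onto the plane facing the source."""
    bx, by, bz = baseline_m
    h, d = hour_angle_rad, declination_rad
    u = math.sin(h) * bx + math.cos(h) * by
    v = (-math.sin(d) * math.cos(h) * bx
         + math.sin(d) * math.sin(h) * by
         + math.cos(d) * bz)
    return u, v

def uv_track(baseline_m, declination_deg, hours=12, step_hours=1.0):
    """Sample the projected baseline over several hours of Earth rotation."""
    d = math.radians(declination_deg)
    n = int(hours / step_hours) + 1
    # The Earth turns 15 degrees per hour; the window is centred on hour angle 0.
    return [
        uv_point(baseline_m, math.radians(15.0 * (i * step_hours - hours / 2)), d)
        for i in range(n)
    ]

# Hypothetical baseline of a few thousand km; declination of about 12.4 degrees.
track = uv_track((4.0e6, -3.0e6, 2.0e6), 12.4)
for u, v in track[::3]:
    print(f"u = {u / 1e3:9.1f} km   v = {v / 1e3:9.1f} km")
```

Each printed pair is a different measurement position for the same two telescopes. More hours and more telescope pairs cover more positions, and the imaging algorithms have to infer what lies in the gaps.
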
This is why the image took up five petabytes of data. Could you imagine the data involved if it were possible to cover the Earth with mirrors to "see" the whole image?