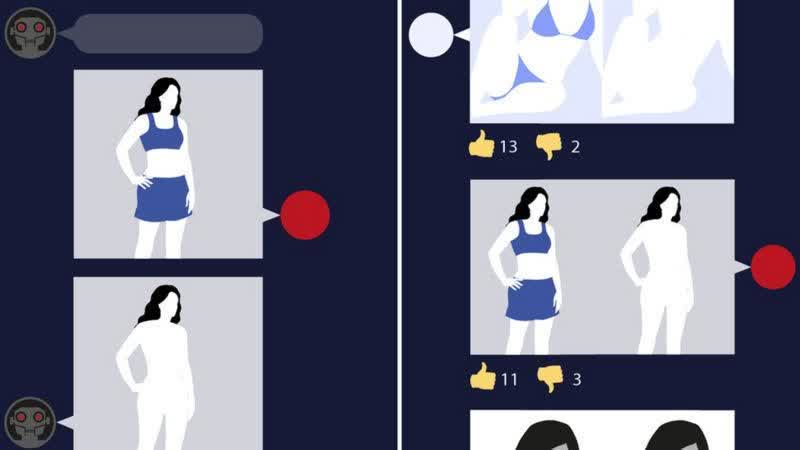

WTF?! A report by intelligence group Sensity, which researches faked images and other malicious visual media, revealed that a deepfake bot was made freely available on the messaging platform Telegram. With this bot, users publicly released nude deepfakes of thousands of women based on widely available social media photos.

A recent report has uncovered over 100,000 faked nude photos of women based on social media images, which were then shared online.

This type of deepfake technology is not new. Similar software has unfortunately been used to produce fake pornographic content of celebrities for years.

This dramatic increase in images stems from a "deepfake bot" residing in a private channel on the messaging app Telegram. Users can send innocuous photographs of women, and the bot will digitally disrobe the women pictured; the resulting images are then spread on the messaging platform.

The service's administrator, known as "P" online, responded to this reporting: "I don't care that much. This is entertainment that does not carry violence." P went on to say that the quality of images was unrealistic enough that it would not be used for blackmail and downplayed the application's harm, stating, "There are wars, diseases, many bad things that are harmful in the world."

Sensity, the intelligence company researching this recent deepfake surge, stated that between July 2019 and 2020, a reported 104,852 women were targeted by publicly shared images. Follow-up research into pages on which the deepfake bot was advertised revealed that the number of people targeted might actually be closer to an unbelievable 680,000.

"Having a social media account with public photos is enough for anyone to become a target," warned Sensity's chief executive, Giorgio Patrini. While the technology is not novel, Patrini says targeting private individuals is a "relatively new" practice.

Reporters at the BBC tested this bot with consent from participants and found the results to be "poor," including one image of a "woman with a belly button on her diaphragm."

Some of the images revealed in this investigation reportedly appeared to depict underage individuals, "suggesting that some users were primarily using the bot to generate and share paedophilic content."

As administrator, P has said that pedophilic content is deleted if it is found and has even claimed he will soon delete all images shared on the platform.

Surveys of the bot service's users showed 70% of the roughly 101,000 members were from Russia and other ex-USSR countries, where Telegram is a popular messaging app. Sensity's research also showed the bot was advertised heavily on the Russian social media website VK.

"Many of these websites or apps do not hide or operate underground, because they are not strictly outlawed," said Sensity's Giorgio Patrini. "Until that happens, I am afraid it will only get worse."

Victims of faked porn can be seriously impacted both personally and professionally, and as a result, certain states in the U.S. have banned the act, though it is not outlawed nationally.

A report from Durham University and the University of Kent described the state of legal protections around deepfakes and revenge porn in the UK as "inconsistent, out-of-date and confusing."

The Sensity report's authors reportedly shared this information with law enforcement, the platform, and the media groups in question, but have not received any response.